
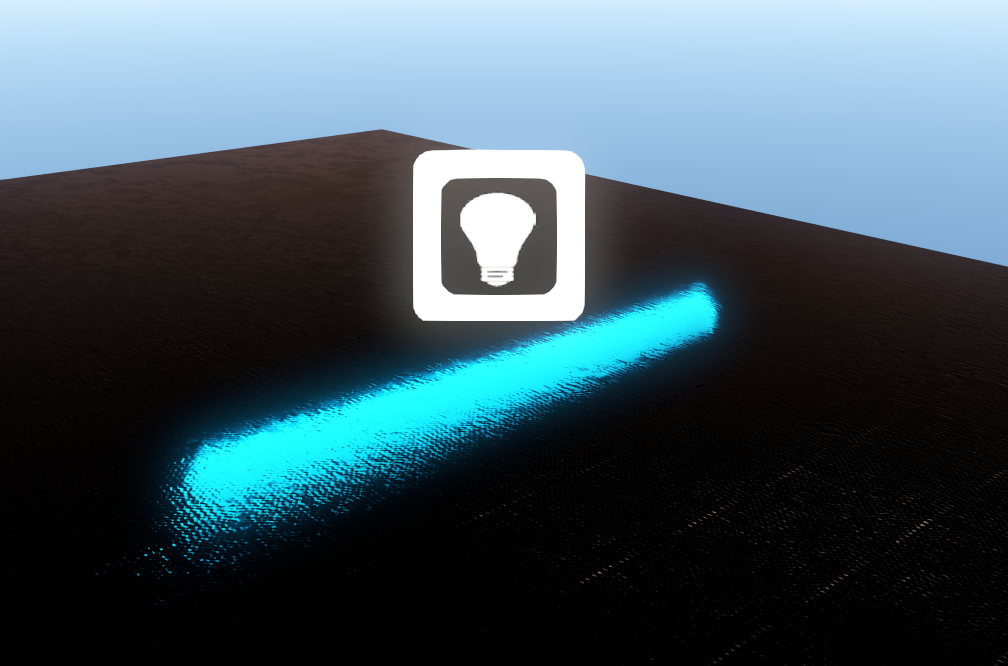

As you might have already guessed, LipsEngine 2.0 uses a forward shading/rendering approach. In my first blog post about the future of LipsEngine I already had several things in mind, for example more freedom in material properties, anisotropic specular highlights, and not being bound to a G-Buffer, which brings its own problems with things like alpha-blended geometry.

After having worked solely with deferred renderers for the last 2 years, I wanted to try something different that does not trap you the way deferred renderers do, forcing you into workarounds for transparency, which is what most commercial engines out there today still have to do (CryEngine, Unreal Engine, Unity, ...). And the problems keep adding up, since you not only have to build a separate forward pipeline for the alpha-blended geometry but also have to "somehow" make all the lighting match up and stay consistent throughout the scene, which can be quite a challenge sometimes.

So I decided early on that I wanted to try Tiled Forward Shading (aka Forward+), first seen in the AMD Leo demo, I believe. My implementation is based on the most recent AMD SDK sample, which incorporates point and spot lights plus a few additional features and optimizations, such as support for transparent geometry and mitigation of depth discontinuities. The process works as follows:

1. Depth pre-pass. This is required to reduce overdraw / redundant shading and to supply the following compute shader with depth data for culling.
2. Light culling pass using a compute shader. The screen is divided into a grid of tiles, and the compute kernel is run so that each thread group corresponds to one tile. Each thread group calculates an asymmetric frustum in view space that fits around its tile. A min and max depth bound is then calculated from the depth information of the previous pass to fit the frustum more tightly around the scene geometry. This final frustum is tested against each light's bounding sphere, using a buffer containing the center and radius of every light. Afterwards each tile has its own list of light indices in a global light index buffer.
3. Forward-shaded material/lighting pass. This is a fairly regular forward shading pass that finds out which tile the currently shaded pixel belongs to and fetches the indices of all lights in that tile. After that we just perform the regular light shading.

Another thing I've been working on is area lights. As you might or might not know, in real life every light source has an area that is reflecting or emitting light. There's no such thing as a "point light", meaning light emitted from an infinitely small point in space. So the recent research into area lights has been a welcome addition to modern realtime rendering. I've implemented a spherical light and a tube light based on the representative point method presented in Brian Karis's SIGGRAPH 2013 presentation. Basically, what we do is calculate the point on the light's geometry that is closest to the reflection ray, and so modify only the cosine between the surface normal N and the light vector L to "broaden" its distribution.

Here's a screenshot and some videos showcasing the lighting system (yes, I know, still no shadows... yet ^^).
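To make the culling and lookup steps a bit more concrete, here is a minimal CPU-side sketch in Python. It is not the engine's compute shader and not the AMD sample code. It assumes a left-handed view space with the camera looking down +z, a symmetric perspective projection described by a vertical field of view, lights given as hypothetical `(x, y, z, radius)` tuples in view space, and per-tile depth bounds that are already known. All function names are made up for illustration.

```python
import math

def normalize(v):
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)

def tile_side_planes(tx, ty, tile_size, width, height, fov_y):
    """Inward-facing side planes (all passing through the eye) of one tile's frustum."""
    tan_half = math.tan(fov_y / 2.0)
    aspect = width / height

    # Pixel coordinates -> view-space x/y at depth z = 1 (pixel y grows downwards).
    def view_x(px):
        return (2.0 * px / width - 1.0) * aspect * tan_half

    def view_y(py):
        return (1.0 - 2.0 * py / height) * tan_half

    x_left = view_x(tx * tile_size)
    x_right = view_x(min((tx + 1) * tile_size, width))
    y_top = view_y(ty * tile_size)
    y_bottom = view_y(min((ty + 1) * tile_size, height))

    return [
        normalize((1.0, 0.0, -x_left)),    # left
        normalize((-1.0, 0.0, x_right)),   # right
        normalize((0.0, 1.0, -y_bottom)),  # bottom
        normalize((0.0, -1.0, y_top)),     # top
    ]

def cull_lights_for_tile(planes, z_min, z_max, lights):
    """Return the indices of all light spheres that may touch the tile frustum."""
    visible = []
    for index, (cx, cy, cz, radius) in enumerate(lights):
        # Reject against the tile's min/max depth bounds first.
        if cz + radius < z_min or cz - radius > z_max:
            continue
        # Conservative sphere-vs-plane test against the four side planes.
        if all(nx * cx + ny * cy + nz * cz >= -radius for nx, ny, nz in planes):
            visible.append(index)
    return visible

def build_tile_light_lists(width, height, tile_size, fov_y, depth_bounds, lights):
    """One light index list per tile, stored row-major (what the culling pass produces)."""
    tiles_x = math.ceil(width / tile_size)
    tiles_y = math.ceil(height / tile_size)
    lists = []
    for ty in range(tiles_y):
        for tx in range(tiles_x):
            z_min, z_max = depth_bounds[ty * tiles_x + tx]
            planes = tile_side_planes(tx, ty, tile_size, width, height, fov_y)
            lists.append(cull_lights_for_tile(planes, z_min, z_max, lights))
    return lists, tiles_x

def lights_for_pixel(px, py, tile_size, tiles_x, lists):
    """What the shading pass does: find the pixel's tile and fetch its light list."""
    return lists[(py // tile_size) * tiles_x + (px // tile_size)]

# Example: 64x64 target, 16-pixel tiles, 90 degree vertical FOV, two lights.
lights = [(0.0, 0.0, 5.0, 0.5), (0.0, 0.0, 50.0, 1.0)]
depth_bounds = [(1.0, 10.0)] * 16  # every tile sees geometry between z = 1 and z = 10
tile_lists, tiles_x = build_tile_light_lists(
    64, 64, 16, math.radians(90.0), depth_bounds, lights)
print(lights_for_pixel(20, 20, 16, tiles_x, tile_lists))  # -> [0]
```

The small light in front of the camera ends up only in the four center tiles, and the far light is rejected everywhere by the depth bounds. The plane test is conservative, so a sphere near a tile corner can be reported as visible even though it misses the tile.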
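For the spherical area light, the representative point idea can be sketched roughly like this. This is my reading of the method from Karis's presentation, not the engine's shader code: it assumes the shaded point sits at the origin, `to_center` is the vector from the shaded point to the light's center, and `reflection` is the normalized reflection vector. The helper names are hypothetical, and the sketch omits any energy normalization as well as the tube light.

```python
import math

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def add(a, b):
    return tuple(x + y for x, y in zip(a, b))

def sub(a, b):
    return tuple(x - y for x, y in zip(a, b))

def scale(v, s):
    return tuple(x * s for x in v)

def length(v):
    return math.sqrt(dot(v, v))

def normalize(v):
    return scale(v, 1.0 / length(v))

def sphere_light_representative_point(to_center, reflection, radius):
    """Point on (or inside) the light sphere that is closest to the reflection ray."""
    # Vector from the light center to the closest point on the reflection ray.
    center_to_ray = sub(scale(reflection, dot(to_center, reflection)), to_center)
    distance = length(center_to_ray)
    # Clamp the step to the sphere radius (same effect as saturate(radius / distance)).
    t = 1.0 if distance <= radius else radius / distance
    return add(to_center, scale(center_to_ray, t))

# Example: light 10 units above the surface, reflection tilted 45 degrees away from it.
to_center = (0.0, 10.0, 0.0)
reflection = normalize((1.0, 1.0, 0.0))
point = sphere_light_representative_point(to_center, reflection, 1.0)
light_dir = normalize(point)  # used in place of the direction to the light's center
```

With a radius of 0 this falls back to the direction toward the light's center, i.e. a plain point light. If the reflection ray passes through the sphere, the returned direction is the reflection vector itself.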

